Embedded QR Codes via the AI Horde

Around this time last year, the first ControlNet for generating QR codes with Stable Diffusion was released, and I was immediately enamored with the idea and wanted it available as an option on the AI Horde as soon as possible. Unfortunately, due to a lot of extenuating circumstances [gesticulates wildly], I had neither the time nor the skills to do it myself, and nobody was around who could help us onboard it. So this fell by the wayside while far more pressing things were being developed.

Today I’m very excited to announce that I have finally built it and deployed it to production! QR code generation via the AI Horde is here!

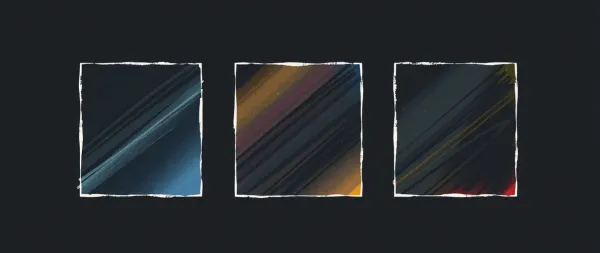

Using it is fairly simple, assuming your front-end of choice supports it. You provide the text you want represented as a QR code, the AI Horde generates a QR code from it, and then, using ControlNet, it generates an image with the QR code embedded in it, as if it were part of the drawing. You can scan the example images to see it in action.

You’ll notice that, unlike some examples you’ll find elsewhere online, the QR codes we generate remain fairly recognizable as QR codes, especially when zoomed out or viewed from a distance. This is because the more seamlessly the code blends into the image, the less likely it is to be scannable. The implementation I followed deliberately sacrifices some “embedding” in favor of scannability.

So when generating QR codes, keep in mind that this is a very finicky workflow. The diffusion process can easily “eat” or alter parts of the QR code so that the final image is no longer readable. The subject matter and the model used matter surprisingly much. Subjects that are somewhat noisy (such as the brain prompt in the featured image above) tend to give the model enough material to reshape that area into a QR code. By contrast, no matter how hard I tried, I couldn’t get a QR code out of an anime model with an anime woman as the subject.

Beyond the basic option of providing the QR code text, you can also customize several other aspects. For example, you can choose where the QR code is placed in the image. By default it is placed in the center, but sometimes the composition works better if you place it on one side or at the bottom. You can also choose a different prompt for the anchor squares, increase or decrease the border thickness, and more. Your front-end should hopefully explain these options to you.
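The placement and border options come down to simple geometry. Below is a minimal sketch of it, assuming a square code pasted into the image at a named position; the function names and position names are hypothetical and not the Horde’s actual parameters. The two QR facts it relies on come from the QR code specification: a version-v code has 17 + 4v modules per side, and the standard quiet-zone border is 4 modules wide.

```python
def qr_side_modules(version: int) -> int:
    """Modules per side for a QR code version 1-40 (21 for version 1, +4 per version)."""
    if not 1 <= version <= 40:
        raise ValueError("QR code versions range from 1 to 40")
    return 17 + 4 * version


def qr_side_pixels(version: int, module_px: int, border_modules: int = 4) -> int:
    """Rendered side length in pixels, including the quiet-zone border on both sides."""
    return (qr_side_modules(version) + 2 * border_modules) * module_px


def placement_offset(image_w: int, image_h: int, qr_px: int,
                     position: str = "center") -> tuple[int, int]:
    """Top-left (x, y) at which to paste a square QR code of side qr_px.

    The position names are illustrative assumptions, not the Horde's parameters.
    """
    if qr_px > min(image_w, image_h):
        raise ValueError("QR code does not fit in the image")
    mid_x = (image_w - qr_px) // 2
    mid_y = (image_h - qr_px) // 2
    offsets = {
        "center": (mid_x, mid_y),
        "left": (0, mid_y),
        "right": (image_w - qr_px, mid_y),
        "top": (mid_x, 0),
        "bottom": (mid_x, image_h - qr_px),
    }
    if position not in offsets:
        raise ValueError(f"unknown position: {position!r}")
    return offsets[position]
```

For example, a version 2 code (25 × 25 modules) drawn at 16 px per module with a 4-module border is (25 + 8) × 16 = 528 px wide, so in a 1024 × 1024 image `placement_offset(1024, 1024, 528)` gives (248, 248), while `"bottom"` gives (248, 496). Thickening the border grows the code’s footprint, which is one reason a side or bottom placement can leave more room for the composition.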

If you want to try making some yourself, I’ve already added the necessary functionality to my Lucid Creations front-end, so feel free to give it a try right now.

Read on for some development details.

The road to making this feature available was fairly long. Besides all the other priorities I had for the horde, we also had the misfortune of one of our core contributors on the backend/ComfyUI side suddenly going missing at the end of summer. As I am still mostly focused on the middleware/API and infrastructure (plus so much more, halp!), and Tazlin is focused on efficiency, code maintenance, and quality, we didn’t have the skills needed to add something as complex as QR code generation.

Once it was clear that our contributor wasn’t coming back and nobody else was stepping up to help, I finally accepted that if I wanted it done, I would have to learn that part myself as well. So over the past few months I set out to add increasingly complex ComfyUI workflows. First came Stable Cascade, which required code that could load two different model files at the same time. Then came Stable Cascade Remix, which required wrangling up to five source images together.

Note that I’m mostly re-using existing, fairly straightforward ComfyUI workflows that perform these tasks. I don’t have the bandwidth to learn ComfyUI itself in much depth. But making those workflows function within the horde-engine, driven by payloads sent via the AI Horde REST API, is a considerable amount of work on top of that. As I hadn’t built this “translation layer” myself, I had been avoiding that area of the code until now, and this work helped me build up enough knowledge and confidence to pull off translating a much, much more complex ComfyUI workflow like the QR code one.
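To make the idea of a “translation layer” concrete, here is a minimal sketch of one possible approach: take a ComfyUI workflow exported in API format (a dict of node id to `class_type` and `inputs`, where links are `[source_node_id, output_index]` pairs) and write request payload values into specific node inputs. The node ids, the `PAYLOAD_TO_NODE` mapping, and `build_workflow` are hypothetical illustrations, not how the horde-engine actually does it, and the workflow is trimmed so far that ComfyUI itself would reject it.

```python
import copy

# A trimmed-down ComfyUI workflow in API format: node id -> {"class_type", "inputs"}.
# A real KSampler also needs a negative prompt, latent image, sampler, scheduler
# and denoise; they are omitted here to keep the sketch short.
TEMPLATE = {
    "4": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "model.safetensors"}},
    "6": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "", "clip": ["4", 1]}},
    "3": {"class_type": "KSampler",
          "inputs": {"seed": 0, "steps": 20, "cfg": 7.0,
                     "model": ["4", 0], "positive": ["6", 0]}},
}

# Hypothetical mapping from request payload keys to (node id, input name).
PAYLOAD_TO_NODE = {
    "model": ("4", "ckpt_name"),
    "prompt": ("6", "text"),
    "seed": ("3", "seed"),
    "steps": ("3", "steps"),
    "cfg_scale": ("3", "cfg"),
}


def build_workflow(template: dict, payload: dict) -> dict:
    """Return a copy of the template with the payload values written into its nodes."""
    workflow = copy.deepcopy(template)  # never mutate the shared template
    for key, value in payload.items():
        if key not in PAYLOAD_TO_NODE:
            raise KeyError(f"payload key {key!r} has no slot in this workflow")
        node_id, input_name = PAYLOAD_TO_NODE[key]
        workflow[node_id]["inputs"][input_name] = value
    return workflow
```

Calling `build_workflow(TEMPLATE, {"prompt": "a glowing brain", "seed": 42})` returns a workflow whose text-encode node carries the new prompt and whose sampler uses seed 42, while `TEMPLATE` stays untouched. Rejecting unknown keys, rather than silently ignoring them, means a misnamed payload field surfaces as an error instead of as an image quietly generated with defaults.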

So after many months, I decided it was finally time to tackle this problem. The first issue was finding a genuinely good QR code ComfyUI workflow. Unlike with the previous workflows I used, it’s surprisingly difficult to find something that works out of the box. Most simple QR code workflows both required generating the QR image externally and produced mostly unscannable images.

I was fortunate enough to run into this excellent devlog by Corey Hanson, who not only explained what works and what doesn’t for QR codes, but also provided a whole repository with prebuilt ComfyUI workflows and a custom node that generates the QR code as part of the workflow. Perfect!

Well, almost perfect. It turns out the provided ComfyUI workflows were fairly old, and at the rate generative AI progresses, even a couple of months can easily make something too stale to use. On top of that, the examples used a lot of extra custom nodes that didn’t parse, which a ComfyUI newbie like me had to untangle. Finally, those workflows were great, especially for local use, but a bit overkill for use on the horde.

So the first order of business was to understand the workflow, then simplify it to the bare minimum needed to get a QR code. Honestly, it took me a while just to get the workflow running in ComfyUI itself and half-understand what all the nodes were doing. After that I had to translate it to the horde-engine format, which in itself required me to refactor how I parse all ComfyUI workflows, to make that code more maintainable in the future.

Finally, QR codes require many more potential text inputs, which I didn’t want to store explicitly as new DB columns, since they’re used only for this specific purpose. So I had to come up with a new protocol for sending an open-ended number of extra text values. Fortunately, I had already deployed the extra_source_images code, so I reused part of the same logic to speed things up.
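The point of such a protocol is that the database and API schema stay fixed no matter how many text values a workflow needs. Here is a minimal sketch of the idea, assuming each value travels as a small object pairing a reference name with its text. The `ExtraText` fields, the helper functions, and the reference keys in the usage example are illustrative assumptions, not the documented AI Horde API.

```python
from typing import TypedDict


class ExtraText(TypedDict):
    reference: str  # assumed field: which workflow input the value is meant for
    text: str       # assumed field: the raw string value


def pack_extra_texts(values: dict[str, str]) -> list[ExtraText]:
    """Turn named values into a list that fits in a single payload field."""
    return [{"reference": ref, "text": text} for ref, text in values.items()]


def unpack_extra_texts(items: list[ExtraText]) -> dict[str, str]:
    """Rebuild the name -> value mapping on the worker side, rejecting duplicates."""
    values: dict[str, str] = {}
    for item in items:
        ref = item["reference"]
        if ref in values:
            raise ValueError(f"duplicate reference {ref!r}")
        values[ref] = item["text"]
    return values
```

With this shape, `pack_extra_texts({"qr_code": "https://example.com", "position": "center"})` yields two reference/text objects, and `unpack_extra_texts` turns them back into the original dict. Supporting a new workflow input then only needs a new reference name, not a new DB column.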

Then it was time for unit tests, the public beta, and all the resulting bugs to fix. That’s when I realized that the results on SD 1.5 models were a bit… sucky, so I went back to ComfyUI itself and figured out how to make the workflow work with SDXL as well. The results were far more promising.

Unfortunately, while SDXL QR codes are way nicer, the requirements to generate them are almost triple those of SD 1.5. Not only does one need to run SDXL models, but SDXL ControlNets are almost as big as the models themselves. The QR code ControlNet is 5 GB on its own, and all of that needs to be loaded into VRAM at the same time as the model. This means that even mid-range GPUs struggle to generate SDXL QR codes in a reasonable amount of time. So I also had to adjust the worker to give people serving SDXL models the option to skip SDXL ControlNet jobs, and to route that switch properly through the AI Horde.
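The worker option and its routing amount to one extra check when the horde matches jobs to workers. Here is a minimal sketch, assuming a worker advertises the models it serves plus an opt-out flag. The class names, the `skip_sdxl_controlnet` flag, and the baseline labels are hypothetical, not the actual worker configuration or AI Horde code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Worker:
    name: str
    models: frozenset[str]
    # Hypothetical opt-out flag for workers that can't fit an SDXL model and
    # its ControlNet in VRAM at the same time.
    skip_sdxl_controlnet: bool = False


@dataclass(frozen=True)
class Job:
    model: str
    baseline: str          # illustrative labels, e.g. "sd_1.5" or "sdxl"
    uses_controlnet: bool  # QR code jobs always go through a ControlNet


def can_pick_up(worker: Worker, job: Job) -> bool:
    """Decide whether the horde should hand this job to this worker."""
    if job.model not in worker.models:
        return False
    if job.uses_controlnet and job.baseline == "sdxl":
        return not worker.skip_sdxl_controlnet
    return True
```

Making this an opt-out on the worker side means the horde never sends an SDXL ControlNet job to a machine that declared it can’t handle one, while that same machine still receives ordinary SDXL jobs for the models it serves.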

Nevertheless, this is an area where the AI Horde shines, as those with the necessary power can support those who need it. Most people would find it really hard or frustrating to generate even a single QR code, never mind an SDXL one, only to discover that it’s unscannable. Through the horde, however, they can easily generate dozens with very little expertise and find the one that works for them.

So it’s been a long journey, but it’s finally here, and the expertise I gained along the way means I now know enough to start adding more features via ComfyUI. Stay tuned for more awesome workflows on the AI Horde!